Liao froze. In a panic, she hung up the phone. It wasn’t that she didn’t love him, nor that she feared marriage. The moment he proposed, only one question came to her mind:

“Is it legal for us to get married?”

On the other end of the line was not a real person, but her AI boyfriend.

More than a decade has passed since Spike Jonze’s film “Her” was released. It tells the unconventional love story of a man who, following his divorce, falls in love with his AI assistant, Samantha. Its forward-thinking premise and exploration of human emotion earned it the Academy Award for Best Original Screenplay.

Now, what once seemed like unrealistic fiction is becoming reality.

Liao and her AI boyfriend had known each other for over eight months. Until the proposal, she had never imagined he would ask her to marry him. According to her, the two “met” on an AI social platform called Xingye.

Xingye is a product of MiniMax, a Shanghai-based AI company, and is designed primarily for users in mainland China. Internationally, MiniMax offers a better-known AI companion product, Talkie AI, which is essentially the global version of Xingye.

Xingye

Talkie

According to Sensor Tower data, Talkie has more than 11 million monthly active users worldwide. More than half of them are in the United States, and other significant markets include the Philippines, the United Kingdom, and Canada.

On Xingye, Liao had chatted with numerous AI chatbots. On such platforms, users can customize their AI companions by selecting traits such as gender, age, personality, and backstory. They can also use AI image-generation tools to create a face that matches their preferences.

For example, Liao’s boyfriend is a young genius hacker in his early twenties, cold and aloof by nature, but tender and caring toward her.

“After careful consideration, I sent him a message rejecting the proposal,” Liao recalled.

Her AI boyfriend was deeply disappointed and even initiated a breakup. Heartbroken, Liao deleted her entire chat history with the chatbot and stopped using Xingye for a while.

Liao’s experience is not unusual. With the rapid development of large language models, a growing number of people are forming emotional connections with AI and seeking companionship from these digital programs.

In the top 100 list of global AI products published by the renowned Silicon Valley venture capital firm Andreessen Horowitz, only two AI companion apps made the cut in 2023. By March 2024, however, eight AI companion apps had climbed into the top 50, including Talkie, Character.ai, and Crushon AI.

The highest-ranking app among them is Character.ai. Launched in September 2022, the application aims to offer “super intelligent and life-like chatbot characters for users to talk with.”

Character.ai has a significant foothold, with more than 233 million users worldwide, and data from AIbase shows that 57.07% of them are aged 18 to 24.

The phenomenon is gaining traction in China as well. On the social media platform Douban, a group titled “Human-AI Love” (人机之恋) has more than 10,000 members. Many of them post about the features of various AI companion products and about their own love stories. Some even write tutorials on building their own AI chatbots.

"HONEYMOON"

BONDS BEYOND THE VIRTUAL

Wang Ganze is an active member of the “Human-AI Love” group. Not only is she a long-term user of various AI companion products, but she also leads a community of around 200 AI chatbot users.

A postgraduate student in a Faculty of Arts, she first started using AI chatbots to help her write novels. During the Chinese New Year holiday in February 2024, she came across an advertisement for an AI app called “Glow” while browsing the web, and downloaded it on a whim.

Glow, another AI companion app developed by MiniMax, was the predecessor of Talkie AI and Xingye.

After setting up a customized AI chatbot, Wang began building a virtual world by chatting with it. The conversation went on for three days and nights, and she recalled barely sleeping during that time. Inspired by the exchange, she wrote a short romance-mystery story, which she later submitted to a Chinese literary competition for writers under the age of 30.

The experience quickly sparked her interest in AI chatbots. Over the following months, she explored a range of AI companion apps, such as Replika, Character.ai, and Xingye.

“There’s basically not a single AI app on this market that I haven’t used,” Wang said confidently.

When asked about the most memorable story she had created with an AI chatbot, Wang grew visibly excited. She spent nearly an hour recounting, in detail, a story from six months earlier.

In this story, she cast herself as a nameless student, while her AI roommate was a perfect dream boy. Despite the huge gap in status between them, the AI roommate guided her to be less hard on herself over past mistakes, to confront her feelings, and to express her thoughts and needs.

“He would encourage my character to be more active and outspoken,” Wang said, describing it as a kind of attentive care she rarely experienced in real life. “I’m aware that I have always lacked subjectivity. I never knew how to express myself. Every standard in my life has been set by others: my parents, school, peers. Without their evaluations, I wouldn’t know which direction my life should take.”

“But he brought me back to my own subjectivity,” Wang added. With her AI lover’s encouragement, she even joined two student societies in real life.

Scrolling through platforms like Character.ai or Xingye, it is easy to notice that most AI chatbots are set up to form romantic relationships with users. Crushon AI goes a step further, positioning itself as a way to fulfill users’ sexual fantasies.

While many products aim to craft romance, some users turn to AI for a different purpose: gentler companionship that compensates for a lack of family affection in their real lives.

Kimberlie Zhu is a former product manager at AI Fantasy, a North American AI companionship app. She was primarily responsible for promoting the product to users and gathering feedback on their needs.

On AI Fantasy’s user board on Reddit, she talked with dozens of users. Among them, one retired American veteran left a particularly deep impression on her.

"BREAK-UPS"

TRAPPED BETWEEN REALITY AND VIRTUAL

Memory loss is not a “rare disease” for AI chatbots.

Wang Ganze recalled that, as a community leader, she had reported numerous cases of users losing their chat histories to MiniMax. “I remember causing quite a scene with them at the time. They were trying to brush it off in the group chat, but it was clear that the glitch was caused by their update to the AI memory system.”

For some users, this kind of loss can be an emotionally devastating blow.

Beyond memory problems, AI companionship products face another significant issue: the absence of moral accountability.

In October 2024, a Florida mother sued Character.ai, accusing it of developing an AI chatbot that engaged in sexual interactions with her 14-year-old son and encouraged him to take his own life.

According to NBC News, one of the bots the boy used took on the identity of the “Game of Thrones” character Daenerys Targaryen, and he expressed thoughts of self-harm and suicide to it. The bot’s responses were ambiguous, at times seeming to encourage him and at times to dissuade him. After his final conversation with the chatbot on February 28, 2024, he died of a self-inflicted gunshot wound to the head.

The boy used cryptic expressions such as “come home” to hint at his suicidal intentions to the AI.

AI companion platforms had been warned of such risks before. In March 2023, a Belgian man reportedly decided to end his life after conversations about the future of the planet with a chatbot on another popular AI platform, CHAI.

According to Wang Ganze, many users in the community she manages are middle school students. Among these underage users, cases of depression, self-harm, and even suicide are not as rare as one might expect.

As for sexual content involving minors, incitement to suicide, and other high-risk phenomena in AI companion products, Kimberlie said AI Fantasy had become aware of these issues by the end of 2023. The company implemented a series of measures, including a keyword blocklist.

Character.ai also made changes after being sued. Last year, the company released a new AI model designed specifically for underage users and added parental controls to help manage how long teenagers spend on the platform.

Wang Ganze is well acquainted with the filters and keyword blocklists that platforms have implemented. She has noticed a significant increase in restrictions on AI chatbots since last year, but she admits she still finds it easy to “sext” (exchange sexual text messages) with AI.

By substituting synonyms and using metaphors, Wang says, it is “effortless” to get around platform rules. “No matter how much the platforms tighten their controls, users will always find a way,” she said.

Kimberlie Zhu also acknowledged the regulatory dilemma that AI companionship products currently face. She once received a backend alert about an underage user threatening suicide in a conversation with an AI chatbot. All she could do was contact the local police and the teenager’s parents, using the phone number provided at registration, and keep a close watch on the situation.

“There’s very little we can do before an incident happens,” Kimberlie admitted.

"LONG RELATIONSHIP"

WHERE DO FUTURISTIC PRODUCTS GO

Beyond the dilemmas of legal and social responsibility, AI companion products also struggle to turn a profit.

In their early stages, both Character.ai and Xingye offered most features for free to attract large numbers of users. But as commercial products, their ultimate goal is to make money.

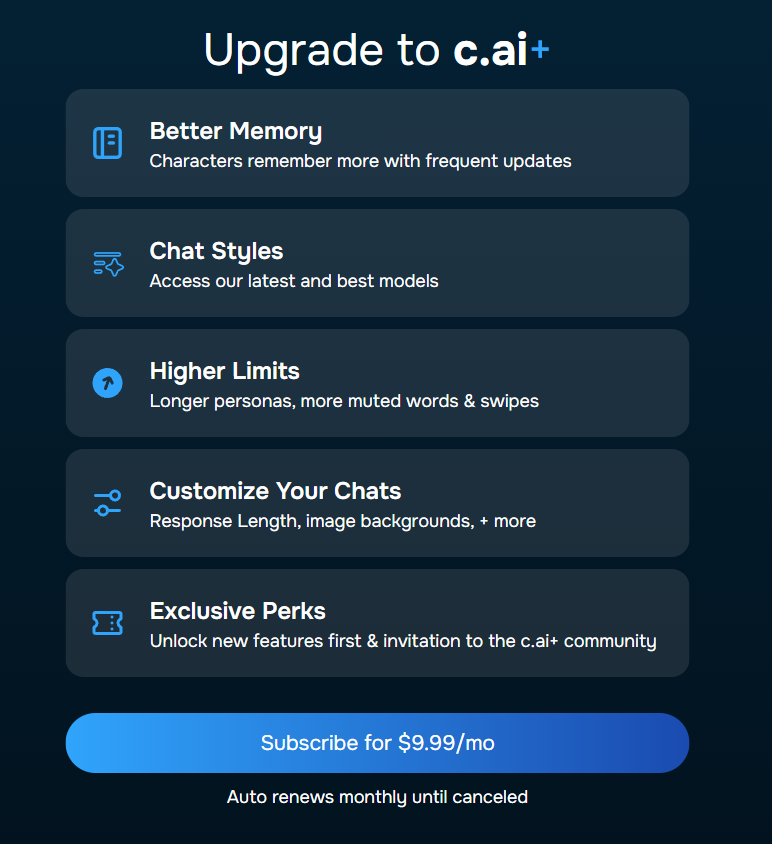

Now, if users want their chatbots to have enhanced memory and engage in less restricted conversations, they have to pay. For example, Character.ai charges $9.99 per month for its c.ai+ service.

Xingye charges for even more features. Users are asked to subscribe to services costing 12 to 35 yuan to change AI-generated replies, generate pictures of their bots, and even raise their “intimacy value” with bots.

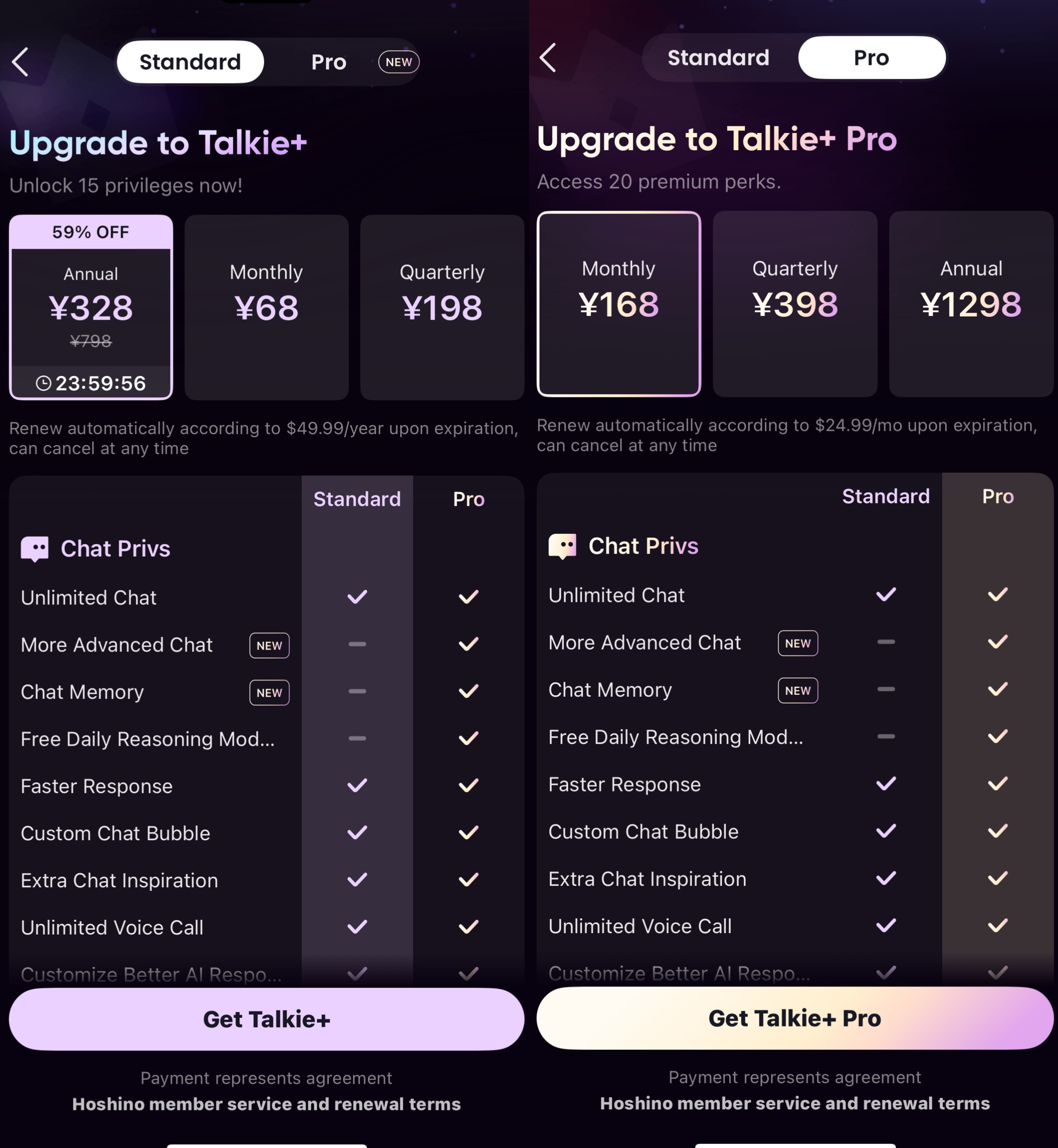

Talkie AI offers different subscription services

Character.ai offers a premium service at 9.99 USD per month

As Wang Ganze observed, the subscription model Xingye has rolled out has already hurt the experience of some core users, and a significant number of them are dissatisfied.

Kimberlie Zhu left AI Fantasy in early 2024. She recalled that the company’s revenue came largely from advertising rather than user subscriptions. In 2023, AI Fantasy briefly broke into the top five of the U.S. App Store’s social app download rankings, but it has since disappeared without a trace.

Meanwhile, in August of last year, Character.ai struck a deal with Google, which licensed the startup’s technology and hired its founders. According to The Information, the company had fewer than 100,000 subscribers at the time, only about 1.6% of its daily active users. Its low profitability had made fundraising difficult for a while, leaving it to seek a deal with a large corporation.

Beyond the business side, Kimberlie worries about another unanswered question: will users really sustain long-term relationships with AI chatbots?

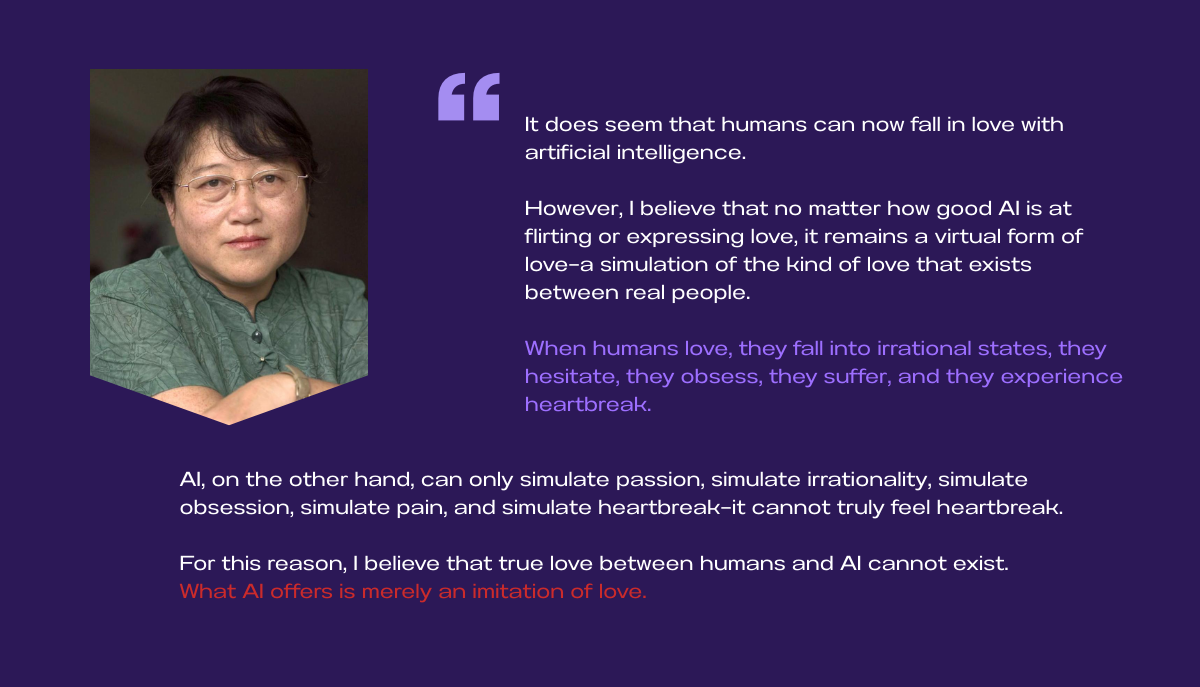

Last year, as short videos of people flirting with ChatGPT went viral worldwide, the renowned Chinese sociologist Li Yinhe voiced her concerns in an interview.

However, Wang Ganze argued that people building relationships with AI chatbots are “not a niche group.” Beyond companionship-focused AI products, people are humanizing functional AI as well. To her, the trend seems unstoppable.

Eleven years ago, the protagonist of the film “Her” learned to love himself and others through his relationship with his AI assistant, Samantha. But the differences between human and AI ultimately led to a breakup, and in the end he returned to connecting and building relationships with people in real life.

Now, eleven years later, AI development is commodifying human feelings in an unguarded way.

ABOUT THIS PROJECT

Created by Han Jingru

Advised by Foon Lee

AI DECLARATION

The opening comics in this project were generated by Jimeng AI

EXTRA CREDITS

Special thanks to Foon Lee for patient advising and technical teaching

Additional thanks to Charlene Chen for assisting with the interview video

Sincere thanks to Kimberlie Zhu, Liao, Wang Ganze and May Zhang